Organizations that invest in strong data engineering foundations are able to deliver trusted analytics, enable AI initiatives, reduce operational costs, and make faster, smarter decisions.

If your data scientists spend 80% of their time cleaning messy spreadsheets and fixing broken pipelines, you are actively losing money. Your business has mountains of data, but turning that raw information into clean, accurate, and actionable insight requires far more than simply buying new tools. When your data infrastructure is weak, reports that should take five minutes can end up taking three days to build.

Your data pipelines dictate how fast you can move. Building them without a clear architectural strategy creates crippling technical debt. Your teams stop innovating and start functioning as an expensive IT support desk, spending their days patching leaks instead of building systems that drive revenue.

Data engineering is the foundation of your entire analytics and AI strategy. It is the discipline of creating systems to collect, store, and process data at scale so that it is reliable and immediately useful.

This guide provides a comprehensive, business‑ready breakdown of the top 10 data engineering best practices, helping you build a scalable, secure, and future‑proof data platform.

What Are Data Engineering Best Practices?

Data engineering best practices are proven principles and methodologies used to design, build, operate, and scale reliable data pipelines and platforms. They ensure data is accurate, available, secure, scalable, cost‑efficient, and analytics‑ready, supporting BI, AI, and operational use cases across the organization.

Why Data Engineering Is Important Today for Your Business

Feeding bad data to machine learning models creates confident hallucinations. Industry research consistently shows that poor data quality and unreliable pipelines have direct financial consequences. A report by IBM found that many organizations lose millions of dollars annually due to inaccurate, incomplete, or inconsistent data, with losses stemming from rework, operational delays, compliance risks, and misguided decision‑making.

If your pipelines are fragile, your reports are inaccurate. If your reports are inaccurate, your leadership team makes poor decisions. The cost of bad data compounds at every stage of your business. In such a scenario, you need top‑tier data engineering services to design resilient pipelines and stop the data breakdown.

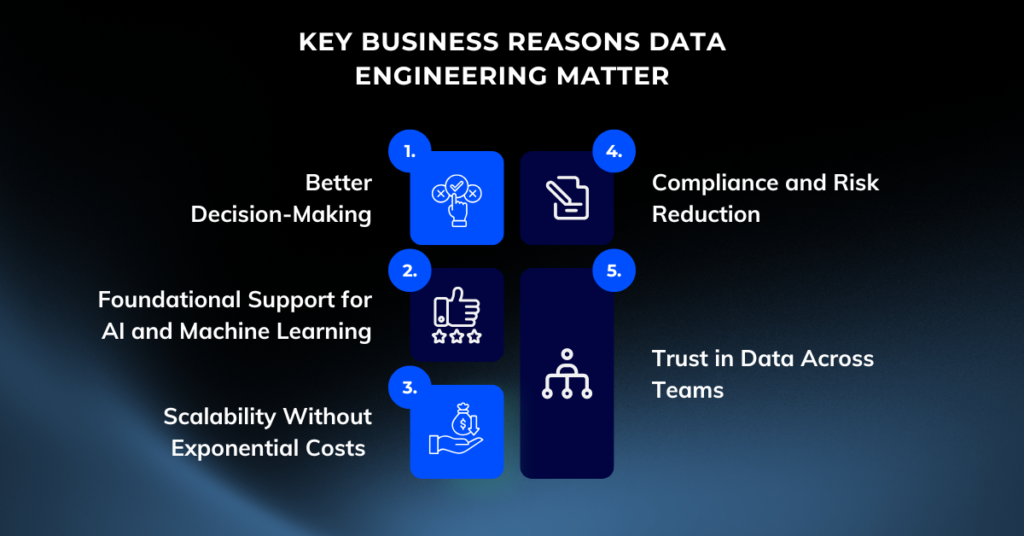

Key Business Reasons Data Engineering Matter

1. Better Decision‑Making

Executives rely on dashboards, reports, and predictive models. When pipelines break or data quality is inconsistent, decisions are delayed or worse, based on incorrect information.

2. Foundational Support for AI and Machine Learning

AI systems depend on clean, timely, and well‑governed data. Poor data engineering is one of the most common reasons AI initiatives fail to move beyond pilots.

3. Scalability Without Exponential Costs

As data volumes grow, poorly designed systems become expensive and slow. Best practices ensure performance scales predictably with business growth.

4. Compliance and Risk Reduction

With regulations such as GDPR, HIPAA, and industry‑specific standards, strong governance and security are no longer optional.

5. Trust in Data Across Teams

When stakeholders trust data, adoption increases. When they don’t, shadow systems and manual workarounds proliferate.

In short, data engineering best practices transform data from a liability into a competitive asset.

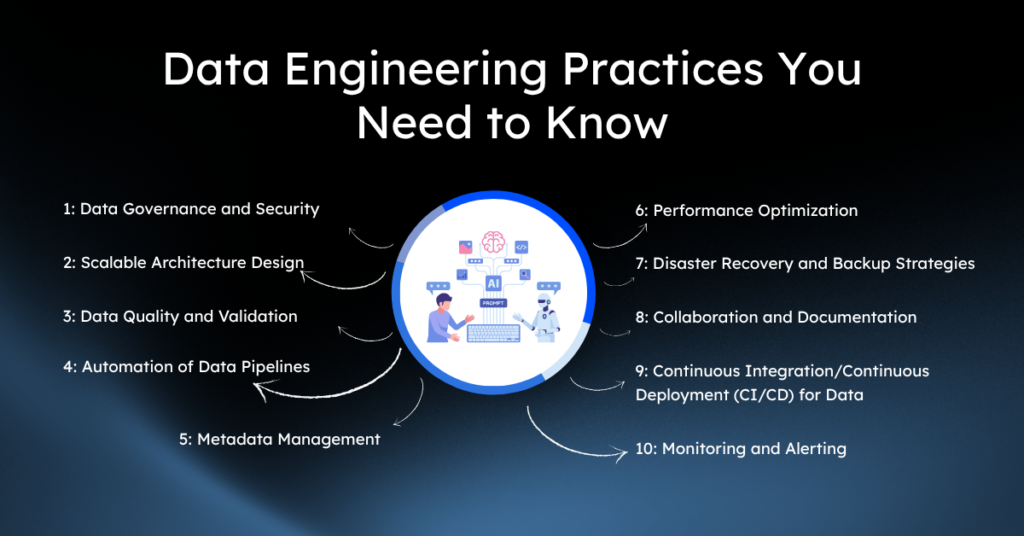

10 Data Engineering Best Practices for Your Business Growth

Best Practice 1: Data Governance and Security

The Risk: Unchecked Data Access

Unregulated data access is a massive regulatory risk. If you cannot easily identify who has access to your sensitive customer information, you are vulnerable to fines, data breaches, and reputational damage. Treating data governance as an afterthought or a procedural hurdle always leads to panic when an auditor knocks on your door.

The Resolution: Embed Governance from Day One

You must embed security and governance directly into your data pipelines from day one.

- Classify sensitive data the moment it enters your system.

- Implement role-based access control (RBAC) at the row and column levels inside your data warehouses, whether you use Snowflake or BigQuery.

- Use data contracts to lock down schemas so producers and consumers agree on exactly what the data looks like. This prevents upstream changes from silently breaking downstream dashboards.

Best Practice 2: Scalable Architecture Design

The Risk: Crippling Technical Debt

Moving too quickly without proper architectural design always leads to crippling technical debt down the road. Hard-coding monolithic pipelines tightly couples your storage to your compute. When your data volume grows from 10 gigabytes to 10 terabytes, those rigid systems buckle, and your infrastructure costs spiral out of control.

The Resolution: Build for Scale

Build cloud-native, distributed systems that scale horizontally.

- Decouple your storage from your compute so you can pay for exactly what you use.

- Adopt an Extract, Load, Transform (ELT) pattern instead of traditional ETL.

- Load raw data directly into a cloud data warehouse first, using its massive computing power to handle the transformations. This prevents your ingestion tools from becoming a bottleneck.

A well‑designed architecture ensures your platform evolves with your business, not against it.

Best Practice 3: Implement Strong Data Quality and Validation

The Risk: Eroding Trust with Bad Data

Bad data drains your resources. When executives cannot trust the metrics on their dashboards, they revert to gut feelings, rendering your entire data infrastructure useless. Fixing data errors manually after they reach production is a fast track to wasted resources and endless frustration.

The Resolution: Automate Quality Checks

Implement automated data quality checks before the data ever reaches your business users.

- Test for null values, uniqueness, and accepted ranges at the transformation layer.

- Quarantine failing datasets automatically and alert the engineering team.

- Use tools like dbt to write these validation rules as simple SQL tests. Catch the errors early, or they will infect every downstream system you operate.

Best Practice 4: Build Reliable and Automated Data Pipelines

The Risk: Suffocating Engineers with Manual Work

Relying on manual scripts to move and clean data will suffocate your engineering team. When engineers have to manually trigger jobs or fix brittle cron jobs every morning, they burn through capital doing repetitive administrative work. Human intervention in data movement introduces human error.

The Resolution: Automate with Orchestration Tools

Automate your pipelines using dedicated orchestration tools like Apache Airflow, Dagster, or managed services like TROCCO.

- Define workflows as code to handle scheduling, dependency management, and retries automatically.

- Configure automatic retries for failed jobs.

- Set up alerts to page the right engineer when a pipeline fails. Automation removes the friction from data delivery and ensures your business gets fresh numbers on a predictable schedule.

Best practice 5: Establish Metadata Management

The Risk: Creating a Data Swamp

Searching for context kills productivity. When analysts do not know what a specific column means or where a dataset originated, they cannot do their jobs. A data lake without proper metadata management rapidly devolves into a toxic data swamp where information goes to die.

The Resolution: Treat Metadata as a First-Class Citizen

Implement a data catalog to track data lineage, definitions, and ownership.

- Use platforms like Atlan or Decube to extract this context automatically, showing you exactly how data flows from your CRM to your final financial reports.

- Ensure your catalog provides the exact lineage for any metric instantly.

- Build transparency to accelerate onboarding and build trust across the organization.

Best Practice 6: Performance Optimization

The Risk: Spiraling Cloud Bills

Writing sloppy queries and ignoring indexing causes spiraling cloud bills. When you query massive datasets without filtering or partitioning, your compute engines scan terabytes of unnecessary information. You end up paying thousands of dollars for simple reports that take hours to run.

The Resolution: Structure Data for Efficient Queries

Structure your data to match how your business queries it.

- Use partitioning to divide large tables into smaller, manageable sections based on common filters, like date or region.

- Apply clustering and indexing where appropriate to accelerate lookups.

- Regularly audit your warehouse to identify and kill expensive, long-running queries that deliver little business value.

Best Practice 7: Disaster Recovery and Backup Strategies

The Risk: Catastrophic Data Loss

Data loss destroys businesses. Storing your critical business history in a single location with no backup strategy is a catastrophic failure waiting to happen. Whether through malicious ransomware, accidental deletion, or regional cloud outages, losing your data pipeline configurations and raw data will halt your operations completely.

The Resolution: Plan for Failure

Establish strict Recovery Point Objectives (RPO) and Recovery Time Objectives (RTO).

- Replicate your critical data across different geographic regions.

- Use version control for all your pipeline code and infrastructure definitions, so you can rebuild your entire environment from scratch in minutes.

- Run regular disaster recovery drills. A backup plan that you have never tested is not a backup plan – it is a liability.

Best Practice 8: Collaboration and Documentation

The Risk: Creating Single Points of Failure

Tribal knowledge creates severe single points of failure. When only one engineer understands how the billing pipeline works, your entire revenue reporting process breaks the moment they go on vacation or leave the company. Poor communication between data producers and data consumers always leads to misaligned expectations.

The Resolution: Document and Communicate

Force your teams to document as they build and treat data engineers as strategic partners, not ticket-takers.

- Keep documentation close to the code, preferably inside the repository or directly in your data catalog.

- Establish clear service-level agreements (SLAs) for data delivery, so the marketing team knows exactly when their daily metrics will be ready.

- Promote clear communication to prevent the endless cycle of blame when a number looks wrong.

Best Practice 9: Continuous Integration/Continuous Deployment (CI/CD) for Data

The Risk: Breaking Production with Untested Code

Deploying untested data code directly to production breaks dashboards and corrupts historical records. If your team makes changes to a SQL transformation without testing it against real data, they will inevitably introduce silent errors that take weeks to notice.

The Resolution: Apply CI/CD Discipline

Apply standard software engineering CI/CD practices to your data pipelines.

- Use Git for version control and require peer code reviews for every pipeline change.

- Set up automated testing environments where changes are validated against a sample of production data before they are merged.

- Stop deployments automatically if code fails tests. This discipline prevents broken logic from infecting your live environment.

Best Practice 10: Monitoring and Alerting

The Risk: Losing Trust from Silent Failures

Silent pipeline failures erode business trust entirely. If your marketing team discovers a broken dashboard before your engineering team does, you have already failed. Relying on end-users to report missing data turns your stakeholders into an unpaid QA team.

The Resolution: Deploy Comprehensive Data Observability

Deploy tools to track data freshness, volume, and schema drift.

- Monitor for business anomalies, not just hard software crashes. Alert your team when the volume of daily transactions drops by 40% unexpectedly.

- Tie technical alerts directly to business KPIs.

- Provide immediate context in alerts so engineers can diagnose and fix issues before the business even notices.

Get Your Data Engineering Right

To deploy comprehensive data observability, you need a solid foundation in data engineering. This includes:

- Data modeling: ensuring that your data is structured and organized in a way that makes sense for your business needs.

- Data pipelines: setting up automated processes to efficiently move and transform data from multiple sources to its final destination.

- Data warehousing: storing large amounts of data in a central location for easy access and analysis.

- Data governance: implementing policies and procedures to ensure the accuracy, security, and privacy of your data.

By investing time and resources into building a strong data engineering infrastructure, you can set yourself up for success when it comes to monitoring and maintaining the health of your data.

From Data Complexity to Clarity: Why Businesses Choose Enlight Lab

Enlight Lab delivers robust solutions tailored to the complexities of modern data ecosystems. With a focus on precision and efficiency, we empower organizations to implement seamless data observability and engineering practices. Our expertise spans from designing scalable architectures to deploying cutting-edge monitoring tools that align with your business objectives.

- Proven Expertise: Our team of seasoned data engineers has extensive experience in implementing observability strategies that eliminate silos and drive actionable insights.

- Custom Solutions: Enlight Lab takes a client-focused approach, crafting solutions that adapt to your unique data workflows and organizational structure.

- Scalability at Core: Whether you’re dealing with terabytes or petabytes of data, our scalable solutions ensure maximum performance without compromise.

- Business-Driven Approach: We bridge the gap between technical excellence and business value, making sure every engineering effort ties back to measurable KPIs.

By choosing Enlight Lab, you gain a trusted partner ready to strengthen your data infrastructure and future-proof your operations. When operational efficiency and innovation are critical, Enlight Lab is the partner you can rely on.

Stop Patching Pipelines! Building a Future-Ready Data Foundation with Enlight Lab

Strong data engineering is the backbone of modern digital businesses.

You cannot scale a business on top of a brittle data infrastructure. Every hour your team spends wrestling with manual extracts, untangling broken dependencies, or arguing over metric definitions is an hour stolen from actual strategic execution. Continuing to operate without strict data engineering disciplines is a fast track to wasted capital and lost market share.

By adopting these data engineering best practices, you can transform raw data into reliable, scalable, and strategic assets that power analytics, AI, and long‑term growth. Start by locking down your governance and establishing clear data contracts. Automate the most painful manual processes first.

Ready to implement disciplined data engineering practices? Stop wasting budget on inefficient pipelines and fragile systems. Partner with Enlight Lab to build a scalable, intelligent data platform that drives consistent growth and unlocks your full market potential.

Frequently Asked Question (FAQ)

Data engineering best practices are standardized methods used to design, build, and maintain reliable, scalable, secure, and high‑performance data pipelines that support analytics, AI, and business decision‑making. These practices ensure data quality, governance, cost efficiency, and long‑term system reliability.

Data engineering best practices ensure that your data infrastructure is robust, reliable, and scalable. By automating processes, enforcing quality standards, and eliminating inefficiencies, your team can focus on delivering actionable insights and enabling data-driven decision-making, which directly impacts business growth.

Broken pipelines lead to unreliable or delayed data, which can cause missed opportunities, poor decision-making, and lost revenue. Ensuring pipelines are reliable and monitored proactively prevents costly data issues from impacting critical business operations.

Automation minimizes manual, error-prone tasks, freeing up resources for higher-value work. It also ensures consistency, improves efficiency, and supports scalability by reducing dependency on human oversight for repetitive processes.

By integrating Continuous Integration and Continuous Delivery (CI/CD) practices, teams can deploy changes quickly and safely while automatically testing for errors. This results in more stable pipelines, fewer bugs, and higher overall data quality.